This reference covers all service configuration options: templates, environment variables, resource allocation, scaling, and secrets management.

Templates

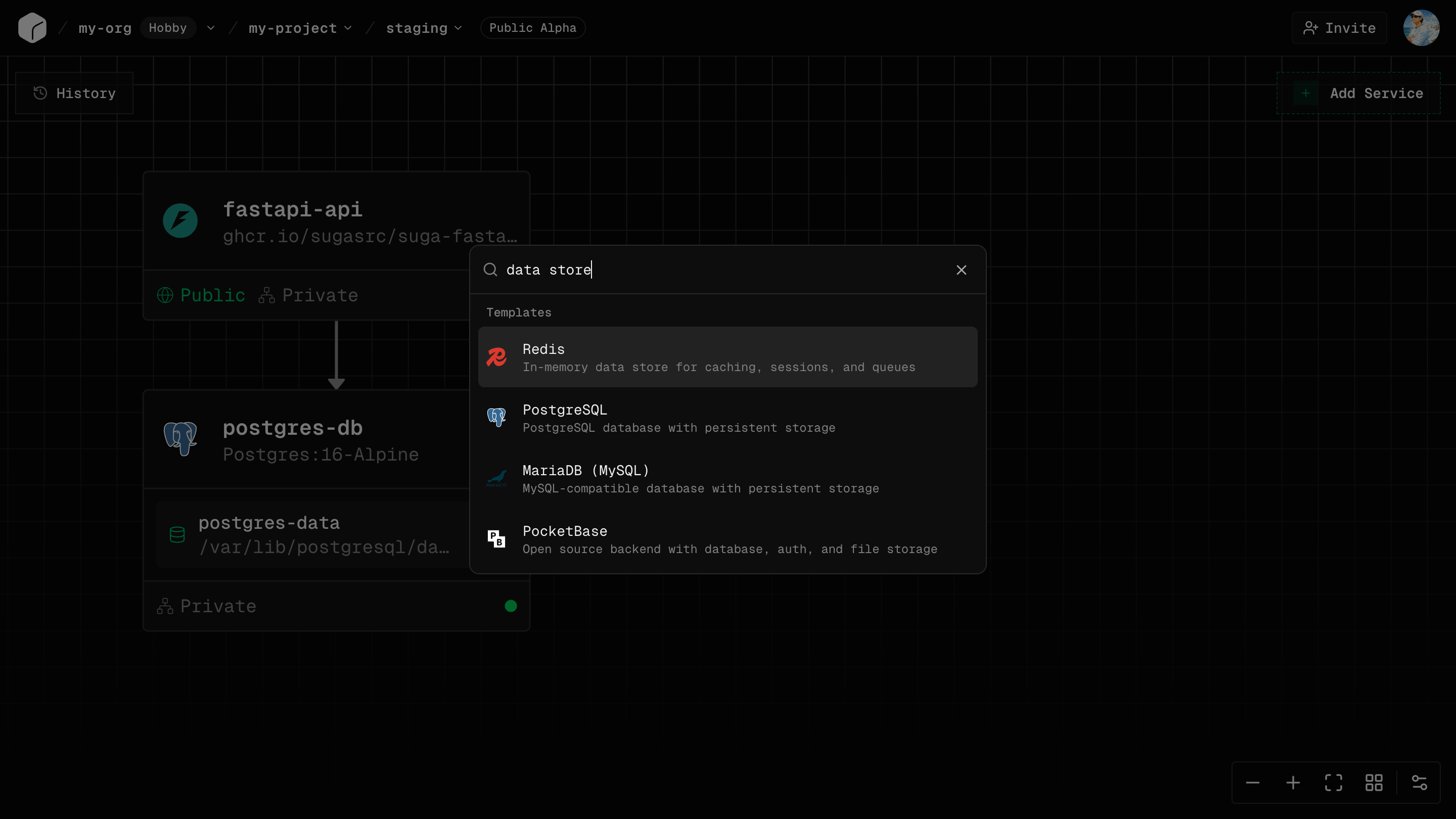

Templates are pre-configured services with production-ready defaults.

What Templates Provide

- Docker images with tested versions

- Environment variables with validation and auto-generation

- Networking and volumes configured automatically

- Multi-service stacks with connections pre-wired

Using a Template

- Open the templates panel

- Select a template to add to your canvas

- Fill required fields (passwords can be auto-generated)

- Customize as needed (resources, env vars, networking)

- Deploy

Multi-Service Templates

Some templates deploy complete stacks (app + database + cache) with all connections configured automatically, including service-to-service networking and shared environment variables.

Environment Variables

Environment variables configure your services at runtime.

Adding Variables

- Select service → Config tab → Environment Variables

- Click “Add Variable”

- Enter key (e.g., DATABASE_URL), value, and check “Sensitive” for secrets

- Deploy to apply

Common Variables

Accessing in Code

Node.js: process.env.DATABASE_URL

Python: os.environ.get('DATABASE_URL')

Go: os.Getenv("DATABASE_URL")

Deno: Deno.env.get("DATABASE_URL")
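A slightly fuller sketch of the Python variant, assuming you would rather have a missing variable fail at startup than at first use. The helper name `require_env` is hypothetical and not part of any Suga SDK.

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable, or raise if it is unset or empty."""
    value = os.environ.get(name)
    if not value:
        # Fail early with a message that names the missing key.
        raise RuntimeError(f"Missing required environment variable: {name}")
    return value
```

Calling `require_env("DATABASE_URL")` once during startup surfaces a missing or empty value immediately after a deploy.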

Environment-Specific Values

Each environment has independent variables. Production and staging can have different database URLs, API keys, and feature flags without leaking between environments.

Resources

Resources define CPU and memory allocation per service.

CPU

| Cores | Best For |
|---|---|
| 0.25 | Small APIs, dev environments |
| 0.5 | Lightweight services, workers |
| 1 | Standard web applications |
| 2 | Medium traffic applications |
| 4 | High traffic, CPU-intensive |

Memory

| Memory | Best For |
|---|---|
| 256 MiB | Tiny services |
| 512 MiB | Small applications |
| 1 GiB | Standard applications |
| 2 GiB | Medium applications |
| 4 GiB | Large apps, databases |
| 8 GiB | Very large applications |

CPU and Memory Ratio

Suga Cloud requires per-service memory to fall within 1 GiB to 6.5 GiB per CPU core. If you configure resources outside this ratio, the smaller value is rounded up automatically, which can result in higher than expected billing. Always set CPU and memory together.

| CPU | Valid Memory Range |
|---|---|
| 0.25 cores | 256 MiB – 1.625 GiB |
| 0.5 cores | 512 MiB – 3.25 GiB |
| 1 core | 1 GiB – 6.5 GiB |
| 2 cores | 2 GiB – 13 GiB |
| 4 cores | 4 GiB – 26 GiB |
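The ratio rule can be checked before deploying with a few lines of arithmetic. The sketch below is illustrative only: the function names are hypothetical, and `adjusted_allocation` encodes one reading of "the smaller value is rounded up" (raise it just far enough to satisfy the ratio). The platform may instead round to a larger step.

```python
MIN_GIB_PER_CORE = 1.0  # lower bound of the ratio stated above
MAX_GIB_PER_CORE = 6.5  # upper bound of the ratio stated above


def valid_memory_range_mib(cpu_cores: float) -> tuple[float, float]:
    """Return the (min, max) memory in MiB allowed for a CPU allocation."""
    return (cpu_cores * MIN_GIB_PER_CORE * 1024,
            cpu_cores * MAX_GIB_PER_CORE * 1024)


def is_valid_allocation(cpu_cores: float, memory_mib: float) -> bool:
    """True if memory falls inside the allowed range for this CPU value."""
    low, high = valid_memory_range_mib(cpu_cores)
    return low <= memory_mib <= high


def adjusted_allocation(cpu_cores: float, memory_mib: float) -> tuple[float, float]:
    """ASSUMPTION: raise the too-small value to the nearest in-ratio value."""
    low, high = valid_memory_range_mib(cpu_cores)
    if memory_mib < low:
        # Memory is too small for this CPU: memory is raised.
        return cpu_cores, low
    if memory_mib > high:
        # CPU is too small for this memory: CPU is raised.
        return memory_mib / (MAX_GIB_PER_CORE * 1024), memory_mib
    return cpu_cores, memory_mib
```

For example, `is_valid_allocation(2, 1024)` is false because 2 cores need at least 2 GiB, and under the assumption above the memory would be raised to 2048 MiB.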

Plan Limits

Per-service maximums and organization-wide pool budgets vary by plan. See Plan Limits for full details.

Scaling

Scale applications by adding resources (vertical) or replicas (horizontal).

Replicas

Replicas are identical copies of your service running simultaneously:

- Load Balancing - Traffic distributed automatically

- Redundancy - If one fails, others continue serving

- Rolling Updates - New versions deploy gradually

Setting Replicas

- Select service → Config tab → Replicas

- Set number (e.g., 1, 2, 3, 5)

- Deploy

Volume Limitation

Services with volumes must have exactly 1 replica. Keep databases at 1 replica and scale the stateless application services that connect to them.

Cost

Replicas multiply resource costs:

- 1 replica with 1 CPU: 1x cost

- 3 replicas with 1 CPU: 3x cost
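The multiplication can be sketched as follows. The function name is hypothetical, and the sketch assumes memory scales with replica count the same way CPU does. Actual prices are not modeled.

```python
def total_allocation(replicas: int, cpu_cores: float, memory_mib: float) -> tuple[float, float]:
    """Return the total (CPU cores, memory MiB) allocated across all replicas."""
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    # Each replica is an identical copy, so its allocation is counted once per replica.
    return replicas * cpu_cores, replicas * memory_mib
```

With 3 replicas at 1 CPU and 1024 MiB each, this returns 3 cores and 3072 MiB in total.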

Vertical Autoscaling

Enable autoscaling on a service to let Suga Cloud adjust CPU and memory between bounds you set, based on actual usage:

- Minimum: the baseline allocation, always reserved

- Maximum: the ceiling Suga will scale to under load

For autoscaling services, the maximum values count toward your organization’s resource pool. Suga reserves the burst ceiling rather than the baseline. See Plan Limits.
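A rough sketch of that accounting for CPU, assuming a fixed-size service counts its configured value and an autoscaling service counts its maximum. The `ServiceResources` type and `pool_cpu_reserved` function are hypothetical, and replica counts are left out because the text above does not say how they combine with autoscaling.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ServiceResources:
    name: str
    cpu_cores: float                        # fixed allocation, or autoscaling minimum
    max_cpu_cores: Optional[float] = None   # autoscaling maximum; None if autoscaling is off


def pool_cpu_reserved(services: list[ServiceResources]) -> float:
    """Sum the CPU counted toward the pool: the maximum for autoscaling services."""
    return sum(
        s.max_cpu_cores if s.max_cpu_cores is not None else s.cpu_cores
        for s in services
    )
```

An autoscaling service with bounds of 0.5 to 2 cores next to a fixed 1-core service would count as 3 cores under this reading, not 1.5.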

Common Questions

Can I share environment variables between services?

Can I change resources without redeploying?

No, resource changes require a new deployment.

Can I horizontally scale services with volumes?

No, services with volumes must have exactly 1 replica.

What happens if I exceed CPU limits?

Service gets throttled (slowed down) but doesn’t crash.

What happens if I exceed memory limits?

Service may be killed (OOMKilled) and restarted. Increase memory to prevent this.
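To decide how much memory to add, it can help to log the process's peak usage. This is a minimal sketch for a Python service on Linux or macOS, using the standard library `resource` module. The function name is hypothetical, and the figure covers only the current process, so it may differ from what the platform measures for the whole container.

```python
import resource
import sys


def peak_memory_mib() -> float:
    """Return the peak resident set size of this process in MiB."""
    peak = resource.getrusage(resource.RUSAGE_SELF).ru_maxrss
    # ru_maxrss is reported in kibibytes on Linux and in bytes on macOS.
    divisor = 1024 * 1024 if sys.platform == "darwin" else 1024
    return peak / divisor
```

Logging this value periodically and comparing it with the configured memory shows how close the service runs to its limit.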